Red-black trees (part 4)

published: Wed, 19-Mar-2008 | updated: Fri, 5-Aug-2016

Last time in this series on red-black trees, we convinced ourselves that leaving the balance of binary search trees to chance was pretty good but not completely optimal. Even if we could guarantee that we added items in a completely random order to our trees (and, to be honest, that's an unrealistic expectation), we would still only get average search times of 1.39 * log2(n), instead of the theoretical log2(n). So we shall have to exploit an "active" balancing algorithm, and the best one we know of is the red-black tree.

So, what exactly is a red-black tree and how does it work? First and foremost — rule 0, if you like, since it's more fundamental than all the others — is that a red-black tree is a binary search tree. There's nodes with keys and items, there's the left subtree of a node being less than the node, there's the right subtree of a node being greater than the node, etc. So you can search for keys in exactly the same way as before. Nothing new there.

It's in the insertion (and deletion, but I'm leaving that until later) of nodes where the quality of redness and blackness comes in. There are four rules for a red-black tree.

- Every node in the tree is either red or black.

- Every external node is black.

- If a node is red, both its children are black.

- For every node in the tree, each path starting at that node to an external node has the same number of black nodes.

The first rule is pretty obvious given the name of the red-black tree, but it's worth stating formally. By the way, the colors have no real meaning (red node bad, black node good?); they're just a way to easily distinguish between the two types of nodes in the rules and algorithms that follow. (For those people who like bit-twiddling, all we're saying is that each node needs a single extra bit to distinguish between "red" and "black", or between 1 and 0 if you like.)

The second rule has two parts. First of all it says that we have to use and consider external nodes (if you remember from last time, they're the null links on the edges of the tree), and by the way they're always black. Showing and discussing a red-black tree without these external nodes is not talking about a red-black tree at all.
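To make rules 1 and 2 concrete, here's a minimal sketch in Python. It is an illustration only, not code from this series, and the names `Node`, `RED`, `BLACK` and `color_of` are made up for the purpose. A node carries one extra color flag, and an external node is represented by `None`, which always reports itself as black.

```python
from dataclasses import dataclass
from typing import Optional

RED, BLACK = True, False  # one extra bit per node (rule 1)

@dataclass
class Node:
    key: str
    color: bool = RED
    left: Optional["Node"] = None    # None stands for an external node
    right: Optional["Node"] = None

def color_of(node: Optional[Node]) -> bool:
    """External nodes (None) are always black (rule 2)."""
    return BLACK if node is None else node.color

leaf = Node("z")                     # a red node with two external children
print(color_of(leaf) == RED)         # True
print(color_of(leaf.left) == BLACK)  # True
```

Going through a helper like `color_of` rather than reading the field directly means the external nodes never get forgotten, which is the point of rule 2.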

The third rule tells us that there cannot be chains of red nodes. A red node always has black children and, as a corollary, the parent of a red node (if it has one) is always black. If you like, red nodes are in the minority in the tree; it's mostly black nodes.

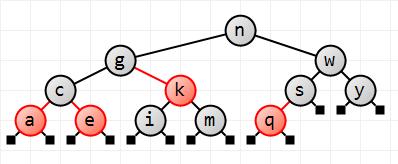

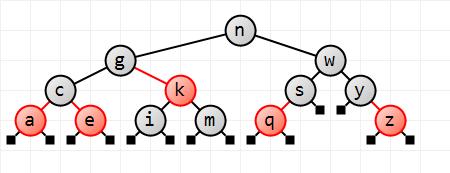

The fourth rule gives us the balancing guarantee. It says that, no matter where you start in the tree, if you follow every path from that node down to an external node you will see the same number of black nodes. This applies to the root of the tree as well, of course. I like to think of the red nodes as being little springs that take up the slack in the paths from root to external node. The red-black tree is not perfectly balanced, but it's good enough and it's the red nodes that give us the leeway to make the tree almost-but-not-quite balanced. In the example image, looking at g, for example, no matter which path you take down to an external node you'll see two black nodes. For s, you'll see one black node, and so on.

The other interesting corollary of rule 4 (taken together with rule 3) is that the longest path from the root to an external node cannot be more than twice the shortest path: at worst it alternates red-black-red-black all the way down, versus the shortest path which would be black all the way down. Again it's a balancing algorithm; refer back to the previous installment where we regularly saw longest paths that were more than twice the length of the optimal path.
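Rules 3 and 4 are easy to check mechanically. The following is a rough sketch of such a checker, not code from this series. The names `black_count` and `path_lengths` are hypothetical, and the counting convention (the starting node and the external node are both counted) is my assumption, so the numbers may be offset from the ones quoted for the image. The tree at the bottom is a small made-up example, not the one pictured.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

RED, BLACK = "red", "black"

@dataclass
class Node:
    key: str
    color: str
    left: Optional["Node"] = None    # None is a (black) external node
    right: Optional["Node"] = None

def black_count(node: Optional[Node]) -> int:
    """Black nodes on every path from `node` down to an external node,
    counting both ends. Raises ValueError if rule 3 or rule 4 is broken."""
    if node is None:
        return 1                     # rule 2: external nodes are black
    if node.color == RED:
        for child in (node.left, node.right):
            if child is not None and child.color == RED:
                raise ValueError(f"rule 3 broken at {node.key}")
    left, right = black_count(node.left), black_count(node.right)
    if left != right:
        raise ValueError(f"rule 4 broken at {node.key}")
    return left + (1 if node.color == BLACK else 0)

def path_lengths(node: Optional[Node]) -> Tuple[int, int]:
    """(shortest, longest) count of internal nodes on a path to an external node."""
    if node is None:
        return 0, 0
    lo_l, hi_l = path_lengths(node.left)
    lo_r, hi_r = path_lengths(node.right)
    return 1 + min(lo_l, lo_r), 1 + max(hi_l, hi_r)

tree = Node("m", BLACK,
            Node("g", BLACK),
            Node("s", RED, Node("p", BLACK), Node("w", BLACK)))
shortest, longest = path_lengths(tree)
print(black_count(tree), shortest, longest)  # 3 2 3
print(longest <= 2 * shortest)               # True
```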

All very well, but so far, it's as if we just made these rules up as we went along. How can they help in reality?

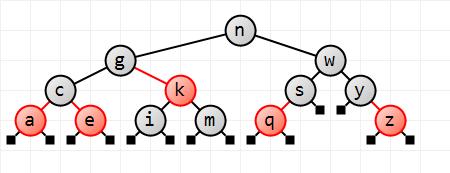

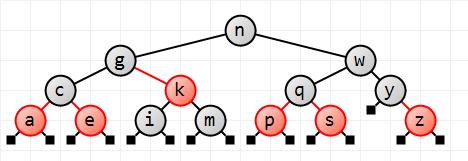

Imagine that we have a red-black tree already set up (the one shown above) and we try and insert a new item, say z. Just as with the binary search tree, we search for the key to be inserted and end up at an external node where the new node will be added, the right child of y. We now have a problem, since we have to obey rule 1: what color should we make it? Red or black? No matter what, its two children are external nodes and are therefore black by rule 2.

If we make the new node black we're immediately in deep trouble with rule 4, since we're replacing a black external node with a black internal node and two black external children. The two new paths through that node will be one black node longer than all the other paths in the tree. If you take a look at the tree, inserting z to the right of y would make the left side of y have one black node in the only path, whereas the right side of y would have two black nodes in both paths.

So the answer is to make it red. Since we're replacing a black node with a red node and two black children, rule 4 will still be satisfied: the two new paths from the root will still have the same number of black nodes.

However, in doing so, we're possibly going to be in violation of rule 3. If the parent of the new red node is black, as it is with z, everything's fine, we don't have a chain of two red nodes in sequence. If the parent is red though, say we're inserting p, we're in deep trouble. How do we get around this?
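As a sketch of the story so far (again, not code from this series), insertion is an ordinary binary search tree insertion that colors the new node red and then reports whether its parent is also red. The function name `insert_red` is hypothetical, and the four-node tree is a cut-down stand-in for the one pictured, so z lands under s here rather than under y.

```python
from dataclasses import dataclass
from typing import Optional

RED, BLACK = "red", "black"

@dataclass
class Node:
    key: str
    color: str
    left: Optional["Node"] = None
    right: Optional["Node"] = None

def insert_red(root: Node, key: str) -> bool:
    """Plain binary search tree insertion of a new red node.
    Returns True if the new node's parent is red, that is, rule 3 is now broken.
    Assumes `key` is not already in the tree."""
    parent = root
    while True:
        side = "left" if key < parent.key else "right"
        child = getattr(parent, side)
        if child is None:            # reached the external node we replace
            setattr(parent, side, Node(key, RED))
            return parent.color == RED
        parent = child

# Black m with black children g and s; s has a red left child q.
root = Node("m", BLACK, Node("g", BLACK), Node("s", BLACK, Node("q", RED)))
print(insert_red(root, "z"))  # False: parent s is black, nothing to fix
print(insert_red(root, "p"))  # True: parent q is red, rule 3 is violated
```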

Put that intractable problem aside for a moment and go back to the binary search tree. Let's look at an algorithm that manipulates a binary search tree, leaving its ordering intact but altering its local structure. I'm talking about a rotation.

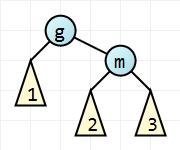

Take the local binary search subtree shown here. I've simplified it greatly by hiding the structure of certain subtrees, and making them triangles instead. We have two visible nodes with keys g and m, and we don't really care what the parent of g is. Subtree 1 has nodes that are all less than g, subtree 2 has nodes that are all greater than g, but less than m, and subtree 3 has nodes that are all greater than m.

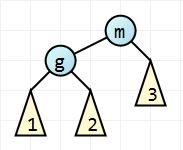

Let's rearrange the nodes so that m replaces g as the parent of this local subtree. Since g is less than m it will have to be the left child of m. But m has a left child already, consisting of all nodes greater than g but less than m. So make this subtree the right child of g instead, since g has lost its right child.

The result is the image shown here. If you look at it carefully you'll see that subtree 1 hasn't changed place and is still in the correct position. Subtree 2 is now g's right child, meaning that it should contain nodes that are all greater than g (which it does). It also can't contain any nodes greater than m (which it doesn't). Subtree 3 hasn't moved either and is still in the right place. So this local structure still obeys the ordering rules of a binary search tree. The parent of this local structure doesn't care about the reordering, since we haven't brought any other nodes in from the outside or, for that matter, lost any.

Consider now though how the local structure of the tree has changed. Subtree 1 is lower than it was, and subtree 3 is higher. We've rebalanced the tree. Since we didn't comment on how deep the subtrees were, we can't say whether we improved or worsened the balance, but we certainly did rebalance it.

This tree manipulation is known as a left rotation. We rotated nodes g and m anti-clockwise, or to the left.
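In code, a left rotation is only a couple of pointer assignments. Here's a minimal sketch (not code from this series; `rotate_left` and `in_order` are names I've chosen), in which the three triangles are stood in for by single nodes c, j and t. The in-order traversal is the same before and after, which is another way of saying the ordering is intact.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Node:
    key: str
    left: Optional["Node"] = None
    right: Optional["Node"] = None

def rotate_left(g: Node) -> Node:
    """Promote g's right child m and return it, so that the caller can
    re-link it under g's old parent."""
    m = g.right
    if m is None:
        raise ValueError("a left rotation needs a right child")
    g.right = m.left   # subtree 2 becomes g's right child
    m.left = g         # g becomes m's left child
    return m

def in_order(node: Optional[Node]) -> List[str]:
    if node is None:
        return []
    return in_order(node.left) + [node.key] + in_order(node.right)

g = Node("g", Node("c"), Node("m", Node("j"), Node("t")))
before = in_order(g)
top = rotate_left(g)
print(top.key, top.left.key, top.left.right.key)  # m g j
print(in_order(top) == before)                    # True
```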

And I'm sure by looking at the before and after pictures that you can see what a right rotation is (the after picture becomes the before picture for a right rotation, and the before picture becomes the after picture). A left rotation and a right rotation are equal but mirror images of each other.
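To see the mirror-image relationship in code, here's a small sketch (hypothetical names, not code from this series) with both rotations side by side. Rotating left and then right at the new top gets us back exactly where we started.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Node:
    key: str
    left: Optional["Node"] = None
    right: Optional["Node"] = None

def rotate_left(g: Node) -> Node:
    """Assumes g has a right child; that child becomes the new top."""
    m = g.right
    g.right, m.left = m.left, g
    return m

def rotate_right(m: Node) -> Node:
    """Mirror image: assumes m has a left child; that child becomes the new top."""
    g = m.left
    m.left, g.right = g.right, m
    return g

def build() -> Node:
    # Single nodes c, j and t stand in for subtrees 1, 2 and 3.
    return Node("g", Node("c"), Node("m", Node("j"), Node("t")))

restored = rotate_right(rotate_left(build()))
print(restored == build())  # True: dataclass equality compares the whole shape
```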

I would advise you to look back at this description and the images and convince yourself that they work. (By "work" I mean that they don't alter the ordering properties of a binary search tree.)

So now that we have rotations under our belt and can restructure a binary search tree with impunity, how does that help us with a red-black tree? What happens if we apply a rotation to a red-black tree?

Well I don't know about you, but my first thought is that we'll really muck up rule 4. Let me define the black-depth of a node as being the number of black nodes you'd visit in getting to an external node. So, in this image, suppose g and m are both black and node m has black-depth x. Then all paths through subtrees 2 and 3 would have x black nodes. g therefore has black-depth x+1, one greater than m's, and so that means that all paths through subtree 1 have x+1 black nodes.

Apply a left rotation and suddenly g has black-depth x+1 if we go through subtree 1, and black-depth x if we go through subtree 2. m is even worse: it'll have three possible black depths depending on which subtree you go through. As I said: a left rotation really mucks you up with regard to rule 4.
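Here's that arithmetic as a small runnable sketch (not code from this series). The helper `black_depths` is hypothetical and encodes my reading of black-depth: count the black nodes strictly below a node, including the external node at the end. With both g and m black and x = 1, the rotation leaves g with two possible depths and m with three.

```python
from dataclasses import dataclass
from typing import Optional, Set

RED, BLACK = "red", "black"

@dataclass
class Node:
    key: str
    color: str
    left: Optional["Node"] = None
    right: Optional["Node"] = None

def black_depths(node: Node) -> Set[int]:
    """Every black-node count seen on the paths from just below `node`
    to an external node (the external node counts, `node` itself doesn't)."""
    depths: Set[int] = set()
    for child in (node.left, node.right):
        if child is None:
            depths.add(1)
        else:
            extra = 1 if child.color == BLACK else 0
            depths |= {d + extra for d in black_depths(child)}
    return depths

def rotate_left(g: Node) -> Node:
    """Plain left rotation, no recoloring; assumes g has a right child."""
    m = g.right
    g.right, m.left = m.left, g
    return m

# x = 1: subtrees 2 and 3 are bare external nodes, subtree 1 is one black node c.
g = Node("g", BLACK, Node("c", BLACK), Node("m", BLACK))
print(sorted(black_depths(g.right)), sorted(black_depths(g)))  # [1] [2]
m = rotate_left(g)
print(sorted(black_depths(g)), sorted(black_depths(m)))        # [1, 2] [1, 2, 3]
```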

But, what if one of the nodes were red? Say g were red and m black. m has black-depth x (so all paths through subtrees 2 and 3 have x black nodes), g would still have black-depth x+1, and a left rotation still messes us up.

Now, on the other hand, suppose the nodes were colored the other way round, g black and m red. All three subtrees would contain paths that had x black nodes, and both g and m would have black-depth x. Apply a left rotation now and for a moment it seems as if all is still lost, until we suddenly realize that swapping the colors of g and m immediately after the rotation (so that m, now on top, is black and g is red) preserves rule 4. In fact, if we consider the before state for a right rotation a mirror image of the before state for a left rotation, it becomes obvious that we should recolor the nodes after a rotation.

And that's how to do a left and a right rotation in a red-black tree. It only applies in the narrow case where the parent is black and one of its children is red. If that's the case we can rotate left or right with impunity. It does not apply if both children are red, or children and parent are all black, or the parent is red and the children are black.
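A minimal sketch of the red-black flavor of a left rotation follows. It is not code from this series, and `rotate_left_recolor` and `black_counts` are hypothetical names. It refuses to run outside the narrow case described above, and the black count seen from the top of the local subtree is the same before and after, so whatever sits above doesn't notice.

```python
from dataclasses import dataclass
from typing import Optional, Set

RED, BLACK = "red", "black"

@dataclass
class Node:
    key: str
    color: str
    left: Optional["Node"] = None
    right: Optional["Node"] = None

def black_counts(node: Optional[Node]) -> Set[int]:
    """Black nodes seen on each path from `node` to an external node,
    counting both ends. A single value means rule 4 holds here."""
    if node is None:
        return {1}
    own = 1 if node.color == BLACK else 0
    return {own + c for c in black_counts(node.left) | black_counts(node.right)}

def rotate_left_recolor(g: Node) -> Node:
    m = g.right
    if g.color != BLACK or m is None or m.color != RED:
        raise ValueError("needs a black parent with a red right child")
    if g.left is not None and g.left.color == RED:
        raise ValueError("does not apply when both children are red")
    g.right, m.left = m.left, g      # the ordinary left rotation
    g.color, m.color = RED, BLACK    # then swap the two colors
    return m

g = Node("g", BLACK, None, Node("m", RED))   # all three subtrees are external
print(black_counts(g))                        # {2}
top = rotate_left_recolor(g)
print(top.key, top.color, black_counts(top))  # m black {2}
```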

So let's go back to our insertion problem. What if the parent of our new node is red? We're inserting p to the left of q, remember. Well, we move up the tree to q's parent (which has to be black, since q is red), and we apply a left or right rotation there. Our problematic red parent node will change color in doing so, and our problem will just disappear in a puff of smoke. Abracadabra. Way cool.

Until we find that our new parent is red, its parent is black, and its sibling is also red; for example, trying to insert d. We can no longer apply a rotation. Hmm.

Another situation. Look at the red-black tree before we added p. Try and add r to the right of q and apply the same rotation trick: you'll see that after the rotation r, which is red, will be the left child of s, also red. No matter how we rotate q and s, we're left with the same problem: a red r attaching to a red parent. Obviously there's more to this insertion stuff than we imagined. So what shall we do?
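You can watch this failure happen on a three-node stand-in for that corner of the tree. This is a sketch only: it is not code from this series, `rotate_right_recolor` is a hypothetical name, and the real tree in the image has more nodes around it. Black s has a red left child q, the new red r hangs off q's right, and we rotate right at s with the color swap.

```python
from dataclasses import dataclass
from typing import Optional

RED, BLACK = "red", "black"

@dataclass
class Node:
    key: str
    color: str
    left: Optional["Node"] = None
    right: Optional["Node"] = None

def rotate_right_recolor(s: Node) -> Node:
    """Right rotation at black s with red left child q, then swap their colors."""
    q = s.left
    s.left, q.right = q.right, s          # q's right subtree moves under s
    s.color, q.color = q.color, s.color   # q turns black, s turns red
    return q

s = Node("s", BLACK, Node("q", RED))
s.left.right = Node("r", RED)             # the newly inserted red node
top = rotate_right_recolor(s)
r = top.right.left
print(top.key, top.color)                               # q black
print(top.right.key, top.right.color, r.key, r.color)   # s red r red
```

The red-red pair hasn't gone away; it has just moved from q and r to s and r.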

For a start we'll end this installment, leave you breathlessly hanging on the edge of your seats, and reveal all next time. Always leave your audience wanting more when you reach the end of a chapter, my old English teacher once said, and a truer word was never spoken. So, until next time when we'll finish up the insertion story, happy rotations.

There is no new code with this installment.

[This is part 4 of a series of posts on red-black trees. Part 1. Part 2. Part 3. Part 5. Enjoy.]